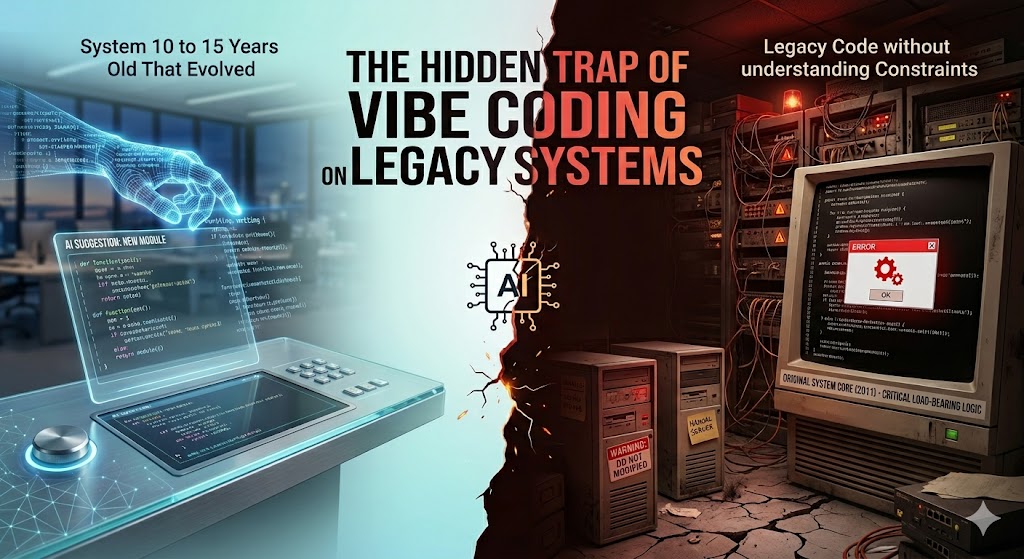

The rise of AI-assisted development, or “Vibe Coding,” has fundamentally changed how we build software. Tools like GitHub Copilot allow developers to describe a feature and watch as blocks of logic materialise. For greenfield projects, it’s a superpower.

But when you point that same “vibe” at a 10- to 15-year-old enterprise system, you aren’t just coding; you’re walking through a minefield with a blindfold on.

The Conflict: Modern Standards vs. Historical Context

Legacy systems are often viewed through the lens of modern “technical debt.” We see clunky patterns, deprecated libraries, and manual memory management, and our first instinct is to “fix” them. However, applying today’s coding standards to a system built in 2011 can be the kiss of death.

Systems that have survived a decade or more evolved within specific framework quirks and infrastructure constraints that no longer exist today:

- Memory Constraints: Code might have been written to avoid overhead that modern hardware absorbs without trouble but that the original server environment could not afford.

- Execution Order: Older frameworks often relied on specific, sometimes undocumented, synchronous execution flows.

- Side Effects: In legacy monoliths, a “bad” pattern in one module is often the load-bearing pillar for three other systems.

Why “Vibes” Fail in the Basement

Vibe coding works on probabilistic patterns. It suggests what “usually” comes next based on billions of lines of (mostly modern) open-source code.

When an AI agent looks at a legacy snippet, it sees an “error” or an “outdated pattern” and suggests a “cleaner” modern alternative. What it doesn’t see are the invisible constraints:

- The Hidden Dependency: That “redundant” check might be preventing a race condition in a 32-bit environment the AI doesn’t know you’re still using.

- The Framework Quirk: Older versions of .NET or Spring had specific behaviors (or bugs) that developers had to code around. Fixing the code without upgrading the entire stack breaks the workaround.

- Irreversible Cascades: A small modification in a legacy core can trigger catastrophic data loss or system-wide latency that goes undetected, because the legacy system likely lacks the test coverage to catch it.
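
As an illustration of the “redundant” check, the sketch below uses Python’s standard `threading` module to show a double-checked cache. The class `ReportCache`, the `keep_second_check` switch, and the `count_builds` helper are all assumptions made up for this example. The `threading.Barrier` is there only to force every thread past the first check at the same moment, so the race is reproducible rather than occasional.

```python
import threading


class ReportCache:
    """Hypothetical cache guarding an expensive, build-once report."""

    def __init__(self, keep_second_check, n_threads):
        self._lock = threading.Lock()
        self._report = None
        self._keep_second_check = keep_second_check  # demo switch only
        # Demo device: holds every thread after the first check so that
        # they all race for the lock together.
        self._barrier = threading.Barrier(n_threads)
        self.build_count = 0

    def _build(self):
        self.build_count += 1  # only ever called while holding the lock
        return {"rows": 1000}

    def get(self):
        if self._report is None:  # first check (fast path, no lock)
            self._barrier.wait()
            with self._lock:
                # The "redundant" second check. Removing it means every
                # thread that passed the first check rebuilds the report.
                if not self._keep_second_check or self._report is None:
                    self._report = self._build()
        return self._report


def count_builds(keep_second_check, n_threads=4):
    """Run n_threads concurrent get() calls and report how many builds ran."""
    cache = ReportCache(keep_second_check, n_threads)
    threads = [threading.Thread(target=cache.get) for _ in range(n_threads)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return cache.build_count


builds_with_check = count_builds(keep_second_check=True)
builds_without_check = count_builds(keep_second_check=False)
```

With the second check in place the report is built once; with it removed, each of the four threads builds its own copy. In a single-threaded test run, both versions look identical, which is why this kind of check is so easy to “clean up” by mistake.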

The Golden Rule: Explain, Don’t Edit

Does this mean AI has no place in legacy environments? No. It just means we need to change the goal.

- AI for Archaeology: Use Copilot to explain what a complex, undocumented 500-line function is doing. It is an incredible tool for translating “COBOL-style Java” into plain English.

- The Modification Taboo: Letting AI create modifications or inject new code into a legacy core is a high-risk gamble. Without the “tribal knowledge” of why the system was built that way, the AI is just guessing, and in a high-stakes live production environment, guessing is dangerous.

Final Thought

Legacy systems are not just “old code.” They are the proven, battle-hardened foundations of our businesses. They require surgical precision, not a “vibe check.”

If you’re working on a system that predates the iPad, use AI to read the map, but keep your own hands on the wheel. The risk of a “clean” but catastrophic failure is simply too high.

© Rowan Tree Scientific Limited All rights reserved.