Robert Solow’s observation that “you can see the computer age everywhere but in the productivity statistics” has a modern echo. Organisations pilot AI tools, users report saving hours per week, and yet the annual accounts show no corresponding line item. Boards grow sceptical. Finance asks where the savings went. Project owners point to efficiency gains that everyone can feel but nobody can bank.

This is the commercial reality of most enterprise AI deployments. The savings are real. The savings are also invisible to standard accounting. That gap is not an argument against measurement; it is an argument for better measurement. The question is how to build an ROI architecture that captures value your general ledger cannot see, and does so in a way that a board, an auditor, and a sceptical CFO will accept.

The Central Problem: Efficiency Created Versus Efficiency Captured

When an AI tool saves a clinician twenty minutes on documentation, one of three things can happen. First, the clinician sees an additional patient: the time converts to throughput, and revenue or service volume rises. Second, the clinician does the same work with lower cognitive load: quality improves, error rates fall, and downstream costs decline. Third, the clinician absorbs the time as slack, and nothing observable changes.

Only the first produces a line item. The second produces value but requires outcome instrumentation to prove it. The third creates efficiency without capturing it, which from a board’s perspective is indistinguishable from waste.

This is the real problem. Most organisations do not lack savings; they lack the operational discipline to convert efficiency into something the business can book. Any ROI framework for AI has to address both halves: how efficiency is measured, and how the organisation commits in advance to capturing it.

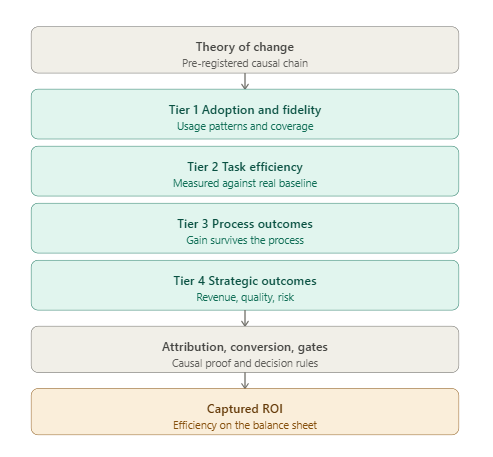

Start With a Theory of Change, Not a Business Case

Traditional ROI models start with projected savings and work backwards. For AI, this is the wrong entry point, because the savings are a function of the organisation’s response, not of the tool itself.

A better starting point is the theory of change: a pre-registered causal chain from the AI capability to the organisational outcome. For each deployment, the sponsor should articulate four things. What specific tasks or decisions does the AI alter? What changes in behaviour is that alteration expected to produce? What organisational structures must exist for those behaviour changes to convert to captured value? And what does the counterfactual look like?
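One way to make the pre-registration concrete is to hold the four answers in a fixed record and refuse sign-off while any of them is blank. The sketch below is illustrative only: the class name, field names, and example text are assumptions, not part of any standard template.

```python
from dataclasses import dataclass, fields
from typing import List


@dataclass(frozen=True)
class TheoryOfChange:
    """Hypothetical pre-registration record; one field per question."""
    tasks_altered: str              # which tasks or decisions the AI alters
    expected_behaviour_change: str  # what people are expected to do differently
    required_structures: str        # what must exist for value to be captured
    counterfactual: str             # what would have happened without the tool

    def missing_fields(self) -> List[str]:
        """Names of questions that have been left unanswered."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# Illustrative draft with the counterfactual still unwritten.
draft = TheoryOfChange(
    tasks_altered="Drafting of clinical documentation",
    expected_behaviour_change="Clinicians finish notes within the appointment slot",
    required_structures="Scheduling rules that release the freed time as bookable slots",
    counterfactual="",
)
print(draft.missing_fields())
```

A draft that reports any missing field is not yet a theory of change, and under this approach it would not proceed to a business case.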

This last point is where most AI business cases fall apart. If you cannot describe what would have happened without the tool, you cannot defensibly claim what happened because of it.

A Tiered KPI Architecture

For AI tools with opaque savings, a single ROI figure is the output of the system, not an input to it. The measurement architecture should be tiered, with each tier answering a different question and providing evidence for the tier above it.

Tier 1: Adoption and fidelity. Is the tool being used, by whom, on what tasks, with what prompt patterns? These are leading indicators. Without them, nothing else matters. With them, you have the minimum condition for value generation.

Tier 2: Task-level efficiency. For the specific tasks the theory of change identified, what is the change in time-to-complete, error rate, revision cycles, or cognitive load? These should be measured against a genuine baseline, not a user-reported estimate. If you did not measure the baseline before deployment, you do not have a baseline.

Tier 3: Process-level outcomes. Does the task-level gain propagate to the process in which the task sits? A ten-minute saving per document is worthless if the document then sits in a queue for three days before the next step. Process-level KPIs test whether efficiency survives its journey through the organisation.

Tier 4: Strategic outcomes. Does the process-level improvement affect the metrics the board actually cares about: throughput, revenue per head, service quality, time-to-decision, regulatory exposure? This is where efficiency created becomes efficiency captured, or fails to.

The architecture is diagnostic by design. If Tier 1 is healthy and Tier 4 is flat, the problem is somewhere in Tiers 2 and 3, and the investigation is focused rather than speculative.
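The diagnostic reading of the tiers can be sketched in a few lines: walk the tiers from the bottom and report the first one that misses its target. The thresholds, metric names, and figures below are invented for illustration; real values would come from the deployment's own baseline and business case.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class TierReading:
    tier: int         # 1 = adoption, 2 = task, 3 = process, 4 = strategic
    metric: str       # hypothetical KPI chosen for this tier
    observed: float   # measured change against the pre-deployment baseline
    threshold: float  # assumed minimum change that counts as healthy

    @property
    def healthy(self) -> bool:
        return self.observed >= self.threshold


def first_break(readings: List[TierReading]) -> Optional[TierReading]:
    """Return the lowest tier that misses its threshold, or None if all pass."""
    for reading in sorted(readings, key=lambda r: r.tier):
        if not reading.healthy:
            return reading
    return None


# Illustrative figures only: adoption and task gains are healthy,
# but the gain does not survive at process level.
readings = [
    TierReading(1, "share of staff using the tool weekly", 0.72, 0.60),
    TierReading(2, "reduction in time-to-complete", 0.18, 0.10),
    TierReading(3, "reduction in end-to-end cycle time", 0.01, 0.05),
    TierReading(4, "gain in throughput", 0.00, 0.03),
]
broken = first_break(readings)
if broken is not None:
    print(f"Investigate Tier {broken.tier}: {broken.metric}")
```

In this example the investigation starts at Tier 3, which matches the queueing scenario described above: the task is faster, but the process is not.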

Attribution Discipline

The single largest failure mode in AI ROI measurement is confusing correlation with causation. The typical pattern is to deploy an AI tool, observe that a metric improved, and claim the whole improvement for the tool. Anyone with a research background will recognise this as unacceptable, yet it is the norm in most enterprise AI reporting.

Serious attribution requires one of three structures. A holdout group that does not receive the tool and can be compared over the same period. A staggered rollout across comparable units, so earlier and later adopters can be compared. Or a natural experiment, such as a period when the tool was unavailable, that creates a defensible counterfactual.
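For the holdout design, one common estimator is difference-in-differences: subtract the holdout group's change from the treated group's change over the same period. The sketch below assumes the two groups would have followed parallel trends without the tool, which is an assumption the design has to defend rather than a given. The function name and all figures are illustrative.

```python
from statistics import mean
from typing import Sequence


def diff_in_diff(
    treated_before: Sequence[float],
    treated_after: Sequence[float],
    holdout_before: Sequence[float],
    holdout_after: Sequence[float],
) -> float:
    """Change in the treated group minus change in the holdout group."""
    treated_change = mean(treated_after) - mean(treated_before)
    holdout_change = mean(holdout_after) - mean(holdout_before)
    return treated_change - holdout_change


# Invented data: minutes per document, one value per team.
treated_before = [30, 32, 28, 30]
treated_after = [22, 24, 20, 22]
holdout_before = [31, 29, 30, 30]
holdout_after = [28, 27, 29, 28]

naive = mean(treated_after) - mean(treated_before)
effect = diff_in_diff(treated_before, treated_after, holdout_before, holdout_after)
print(f"Naive change: {naive} min, attributable change: {effect} min")
```

Here the treated teams improved by eight minutes, but the holdout teams improved by two minutes without the tool, so only six minutes can be attributed to it. The naive before-and-after comparison overstates the effect by a third.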

None of these are exotic. They are standard in clinical trials, policy evaluation, and marketing analytics. Their absence in AI deployments is a choice, and usually a bad one. For boards, the presence of an attribution design is a stronger signal of project seriousness than any projected ROI number.

Converting Efficiency Into Captured Value

Once you can defensibly measure efficiency, you face the conversion problem: how to express it in financial terms that belong in a business case.

For some contexts, direct conversion works. Time saved by a billable worker converts at the billing rate, net of utilisation. Errors prevented convert at the average remediation cost. Throughput increases convert at contribution margin per unit.
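These three direct conversions can be written down as explicit formulas before go-live. The sketch below is one possible reading: it treats "net of utilisation" as meaning that only the utilised fraction of saved hours is actually billed. Function names, rates, and volumes are assumptions for illustration.

```python
def billable_time_value(hours_saved: float, billing_rate: float, utilisation: float) -> float:
    """Saved hours that are actually billed, priced at the billing rate."""
    if not 0.0 <= utilisation <= 1.0:
        raise ValueError("utilisation must be between 0 and 1")
    return hours_saved * utilisation * billing_rate


def error_value(errors_prevented: float, avg_remediation_cost: float) -> float:
    """Errors prevented, priced at the average cost of fixing one."""
    return errors_prevented * avg_remediation_cost


def throughput_value(extra_units: float, contribution_margin_per_unit: float) -> float:
    """Additional units delivered, priced at contribution margin, not revenue."""
    return extra_units * contribution_margin_per_unit


# Invented quarterly figures.
time_value = billable_time_value(hours_saved=100, billing_rate=200, utilisation=0.5)
errors = error_value(errors_prevented=12, avg_remediation_cost=1500)
throughput = throughput_value(extra_units=40, contribution_margin_per_unit=250)
total = time_value + errors + throughput
print(f"Captured value for the quarter: {total:,.0f}")
```

Summing the three lines is only defensible if they do not overlap. If the same saved hours are counted once as billable time and again as extra throughput, the total double-counts.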

For others, you need shadow pricing. A reduction in regulatory documentation burden has no direct market price, but it has an opportunity cost equal to the alternative use of that capacity. A reduction in decision latency has a value that can be estimated from the options it preserves or the risks it avoids.

The rule is simple: if you cannot state the conversion formula in advance, you do not have an ROI, you have a hope. Every AI deployment should have its conversion logic written down before go-live, reviewed by finance, and locked in the business case. Post-hoc conversions are unfalsifiable and should be treated accordingly.

Gates and Sunset Criteria

A measurement architecture without decision rules is theatre. The ROI framework should specify, in advance, what levels at each tier would trigger continued investment, what levels would trigger redesign, and what levels would trigger sunset.
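A pre-registered gate can be as simple as two thresholds per tier, fixed before go-live. The sketch below is a minimal illustration; the class names, threshold values, and the choice of a single metric per tier are assumptions.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    CONTINUE = "continue"
    REDESIGN = "redesign"
    SUNSET = "sunset"


@dataclass(frozen=True)
class Gate:
    tier: int
    continue_at: float   # at or above this level, keep investing
    sunset_below: float  # below this level, retire the tool

    def __post_init__(self) -> None:
        if self.sunset_below > self.continue_at:
            raise ValueError("sunset_below must not exceed continue_at")

    def decide(self, observed: float) -> Decision:
        """Map an observed level to the decision agreed in advance."""
        if observed >= self.continue_at:
            return Decision.CONTINUE
        if observed < self.sunset_below:
            return Decision.SUNSET
        return Decision.REDESIGN


# Hypothetical Tier 2 gate on reduction in time-to-complete.
task_gate = Gate(tier=2, continue_at=0.10, sunset_below=0.03)
for observed in (0.12, 0.05, 0.01):
    print(observed, task_gate.decide(observed).value)
```

Freezing the record reflects the point of pre-registration: the thresholds are set once, in the business case, and the review applies them rather than renegotiating them.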

Pre-registered gates protect the organisation from two failure modes. The first is sunk-cost commitment, where tools that have demonstrably failed continue because nobody wants to be the one to end them. The second is premature celebration, where early adoption metrics are used as evidence for outcomes that have not yet been tested.

Boards should expect gates to be written into the original business case and reviewed at pre-specified intervals. The absence of sunset criteria is itself a governance failure.

The Strategic Implication

For executives, the takeaway is not that AI ROI is hard to measure. It is that the measurement architecture is the ROI strategy. Organisations that deploy AI tools without baseline instrumentation, attribution design, conversion logic, and pre-registered gates are not deploying AI; they are purchasing hope and hoping it compounds.

The organisations that capture AI value are not the ones with the best models or the largest deployments. They are the ones that treat measurement as a first-class engineering problem, invest in it before the tool goes live, and commit in advance to what success and failure look like.

When cost savings are not transparent, the answer is not to abandon ROI. It is to recognise that transparency is a property you build into the deployment, not a property you wait to discover after the fact. Build it in, and the ROI question answers itself. Leave it out, and no model, however capable, will save you from a board that wants to know where the money went.